People are no longer using only traditional search engines. They are asking ChatGPT, Gemini, Claude, Perplexity, Copilot, and AI-powered search tools for direct answers. These tools may summarize websites, compare products, recommend services, and cite sources.

This shift has created a new question for website owners:

How can you make your content easier for large language models to find, read, and understand?

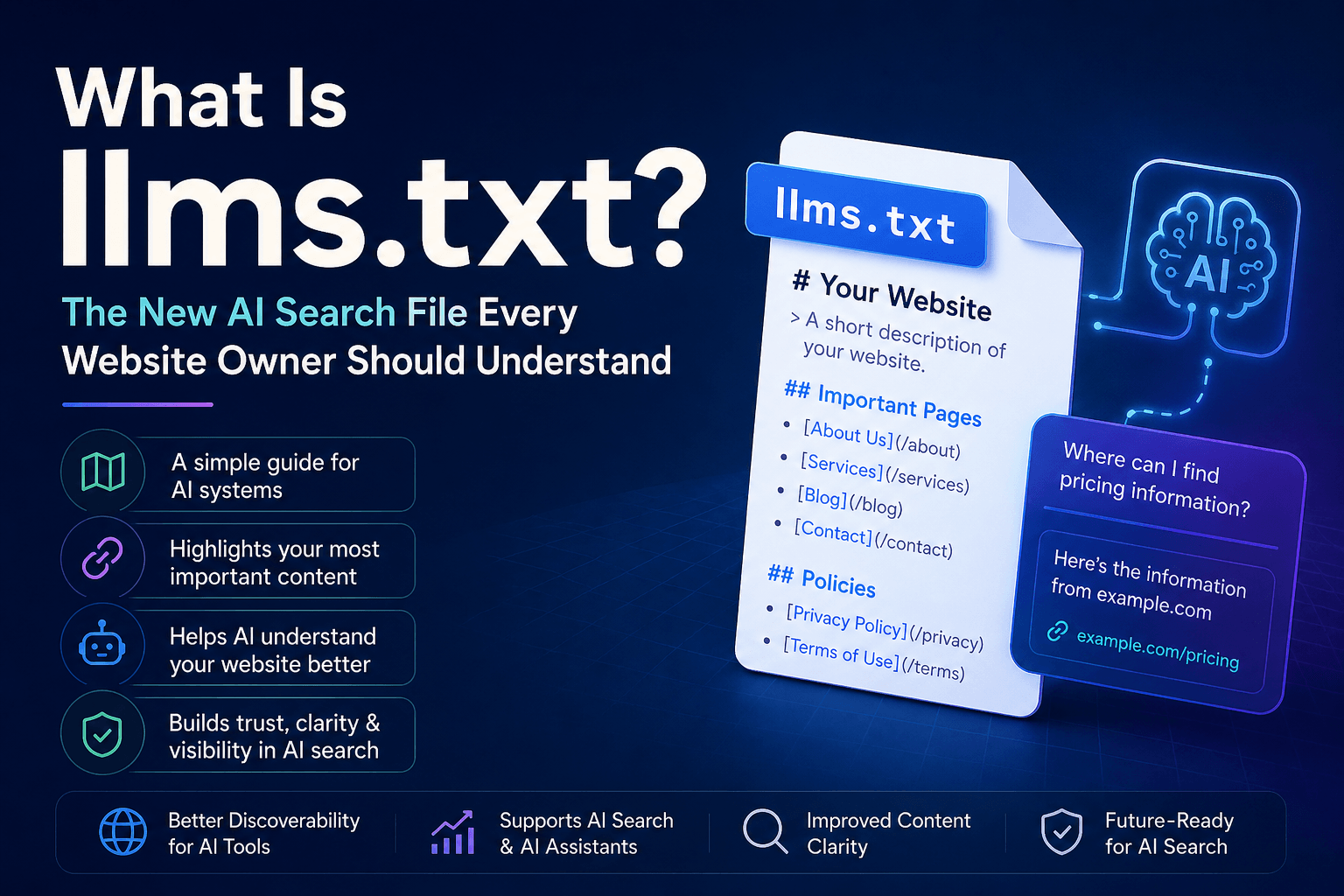

That is where llms.txt comes in.

The llms.txt file is a proposed web standard that gives AI systems a clean, simple guide to the most important content on your website. It works like a curated map for large language models. Instead of forcing AI tools to understand complex menus, JavaScript-heavy layouts, ads, sidebars, and duplicate pages, llms.txt points them toward your best and most useful pages.

The standard was proposed by Jeremy Howard and published on September 3, 2024. The official proposal describes /llms.txt as a file designed to provide information to help large language models use a website at inference time.

But here is the important part: llms.txt is not a confirmed ranking factor.

It may help AI tools understand your site. It may become more important in the future. But current evidence does not prove that simply adding an llms.txt file will increase your AI citations, search rankings, or traffic.

So, should you use it?

The honest answer is: yes, in many cases, but with realistic expectations.

What Is llms.txt?

llms.txt is a plain-text file, written in Markdown, placed in the root directory of a website.

It usually lives here:

https://example.com/llms.txt

The purpose of the file is simple: it tells AI systems which pages on your website are most important.

A good llms.txt file may include:

- A short description of your website

- Your most important product or service pages

- Documentation pages

- API references

- Help center articles

- Blog topic hubs

- Pricing pages

- About pages

- Editorial policy pages

- Author pages

- Contact pages

- Privacy and terms pages

The proposal specifies Markdown for the file because Markdown is simple for both humans and machines to read.

For example:

# Example Website

> Example Website helps small businesses understand SEO, AI tools, and digital marketing.

## Important Pages

- [SEO Guide](https://example.com/seo-guide)

- [AI SEO Guide](https://example.com/ai-seo)

- [Technical SEO Checklist](https://example.com/technical-seo)

## Policies

- [Editorial Policy](https://example.com/editorial-policy)

- [Privacy Policy](https://example.com/privacy-policy)

The goal is not to list every URL on your website. That is what an XML sitemap does.

The goal is to give AI systems a curated summary of your best content.
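A curated file like this can be written by hand, but it can also be generated from a short list you maintain. The following is a minimal Python sketch under stated assumptions: the `Link` class, the `build_llms_txt` function, and the sample pages are hypothetical names chosen for illustration, not part of the llms.txt proposal or of any tool.

```python
from dataclasses import dataclass


@dataclass
class Link:
    """One curated page: a human-readable title and its canonical URL."""
    title: str
    url: str


def build_llms_txt(site_name: str, summary: str, sections: dict[str, list[Link]]) -> str:
    """Render a curated set of links as llms.txt-style Markdown."""
    lines = [f"# {site_name}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        lines += [f"- [{link.title}]({link.url})" for link in links]
    return "\n".join(lines) + "\n"


# Hypothetical site data for illustration only.
sections = {
    "Important Pages": [
        Link("SEO Guide", "https://example.com/seo-guide"),
        Link("AI SEO Guide", "https://example.com/ai-seo"),
    ],
    "Policies": [
        Link("Editorial Policy", "https://example.com/editorial-policy"),
    ],
}

llms_txt = build_llms_txt(
    "Example Website",
    "Example Website helps small businesses understand SEO, AI tools, and digital marketing.",
    sections,
)
print(llms_txt)
```

Keeping the page list in one small data structure makes it easier to review the file whenever your key pages change.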

How llms.txt Is Different from robots.txt and sitemap.xml

Many people compare llms.txt with robots.txt and sitemap.xml. That comparison is useful, but these files have different jobs.

robots.txt

A robots.txt file tells crawlers what they can or cannot access.

It is mainly about crawler permissions.

Example:

User-agent: *
Disallow: /private/

sitemap.xml

A sitemap.xml file lists URLs that search engines can discover and index.

It is mainly about URL discovery.

llms.txt

An llms.txt file tells AI systems which pages are most useful for understanding your website.

It is mainly about content context.

In simple words:

- robots.txt says: “Here is what crawlers can or cannot access.”

- sitemap.xml says: “Here are the URLs on my website.”

- llms.txt says: “Here are the pages that best explain my website.”

This difference matters. llms.txt is not a replacement for SEO, robots.txt, or sitemaps. It is an extra file that may help AI systems process your website more clearly.
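To make the "permissions" role of robots.txt concrete, here is a small Python sketch that uses the standard library's `urllib.robotparser` to check whether a URL may be crawled. The rule set (a wildcard user agent with a blocked `/private/` directory) and the example URLs are assumptions for illustration. The rules are parsed from a string, so nothing is fetched over the network.

```python
from urllib.robotparser import RobotFileParser

# Illustrative rules: every crawler is asked to stay out of /private/.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(url: str, user_agent: str = "*") -> bool:
    """Return True if the parsed rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(is_allowed("https://example.com/seo-guide"))
print(is_allowed("https://example.com/private/report"))
```

Nothing in this check says which pages are important. That is the gap llms.txt tries to fill.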

Why llms.txt Was Created

The web was built mostly for humans and traditional search engines.

But large language models work differently from standard search crawlers. Traditional search engines crawl, render, index, and rank pages at massive scale. AI tools often retrieve content differently. Some use search indexes. Some use live web browsing. Some use retrieval systems. Some rely on third-party data providers.

This creates a problem.

Many websites hide important information inside:

- JavaScript-heavy pages

- Complex navigation menus

- Tabs and accordions

- Long product pages

- Poor internal linking structures

- Dynamic content blocks

- PDFs or documentation systems

- Pages with too much design clutter

For humans, this may still be usable. But for AI systems, it can be harder to extract the most important information.

llms.txt was created as a possible solution. It gives AI tools a clean, text-based starting point.
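To show why a text-based starting point is easy to consume, here is a rough Python sketch of how a tool might read an llms.txt file. It is an assumption about one possible consumer, not a description of how any real AI system works: `parse_llms_txt`, the regular expression, and the sample text are all hypothetical.

```python
import re

# Matches a Markdown list item of the form "- [Title](https://...)".
LINK_RE = re.compile(r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)")

# Hypothetical file contents for illustration.
SAMPLE = """\
# Example Website

> Example Website helps small businesses understand SEO.

## Important Pages

- [SEO Guide](https://example.com/seo-guide)
- [AI SEO Guide](https://example.com/ai-seo)

## Policies

- [Editorial Policy](https://example.com/editorial-policy)
"""


def parse_llms_txt(text: str) -> dict[str, list[tuple[str, str]]]:
    """Group (title, url) pairs by the '## ' heading they appear under."""
    sections: dict[str, list[tuple[str, str]]] = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                sections[current].append((match["title"], match["url"]))
    return sections


parsed = parse_llms_txt(SAMPLE)
for heading, links in parsed.items():
    print(heading, links)
```

A few lines of string handling are enough here, with no JavaScript rendering or layout parsing involved.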

What Is llms-full.txt?

Along with llms.txt, you may also see another file called llms-full.txt.

These two files are related, but they are not the same.

llms.txt

This is a short, curated guide to your most important pages.

llms-full.txt

This is a larger file that may contain the full plain-text or Markdown version of a website’s documentation or content.

The difference is simple:

- llms.txt is the map.

- llms-full.txt is the full content package.

For small websites, llms.txt may be enough.

For technical documentation websites, SaaS platforms, API products, and developer tools, llms-full.txt may be more useful because AI coding assistants and retrieval systems often prefer clean Markdown content.
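As a rough illustration of the "full content package" idea, the Python sketch below joins several Markdown pages into one document. The layout (a title, a `Source:` line, then the body) is an assumption made for this example. `build_llms_full` and the sample pages are hypothetical, and real sites may structure llms-full.txt differently.

```python
def build_llms_full(site_name: str, pages: list[tuple[str, str, str]]) -> str:
    """Join (title, url, markdown_body) pages into one Markdown document."""
    parts = [f"# {site_name}"]
    for title, url, body in pages:
        # Each page becomes its own section with a pointer back to the source URL.
        parts.append(f"## {title}\n\nSource: {url}\n\n{body.strip()}")
    return "\n\n".join(parts) + "\n"


# Hypothetical documentation pages for illustration.
pages = [
    (
        "Quickstart",
        "https://example.com/docs/quickstart",
        "Install the client, then create an API key.\n",
    ),
    (
        "API Reference",
        "https://example.com/docs/api",
        "All endpoints accept and return JSON.\n",
    ),
]

full_text = build_llms_full("Example Docs", pages)
print(full_text)
```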

Why llms.txt Matters for AI Search

AI search is no longer a small trend.

Stanford’s 2025 AI Index reported that 78% of organizations used AI in 2024, up from 55% in 2023. It also reported that global private investment in generative AI reached $33.9 billion in 2024, an 18.7% increase from 2023.

Google’s AI Overviews reached more than 1.5 billion monthly users, according to Alphabet’s Q1 2025 earnings call.

Reuters also reported that ChatGPT had passed 400 million weekly active users in February 2025. OpenAI has also said ChatGPT search handled more than 1 billion web searches in one week after its shopping-search update.

These numbers show one clear trend:

AI tools are becoming discovery engines.

That means website owners need to think about more than Google rankings. They also need to think about whether AI systems can understand, summarize, and cite their content correctly.

llms.txt is one small part of that larger shift.

Adoption Statistics: How Many Websites Use llms.txt?

Adoption is still uneven.

Some developer-focused websites, SaaS companies, and documentation platforms are experimenting with llms.txt. But mainstream adoption is still limited.

A 2025 crawl of the Majestic Million found that only 0.015% of the top one million websites had adopted llms.txt at the time of the study. The same analysis also noted that no major AI company had officially confirmed support for the file.

Another analysis of around 300,000 domains found that only about 1 in 10 domains had an llms.txt file, and there was no measurable link between having the file and receiving more AI citations.

This is important.

The hype around llms.txt is bigger than its proven impact.

That does not mean the file is useless. It means website owners should treat it as a low-cost experiment, not a guaranteed SEO win.

Does llms.txt Improve SEO?

At this stage, llms.txt does not directly improve traditional SEO rankings.

Google has not confirmed llms.txt as a ranking factor. OpenAI has not confirmed that llms.txt improves ChatGPT citations. Anthropic, Perplexity, Microsoft, and other AI platforms have not publicly stated that llms.txt guarantees better visibility.

In April 2025, Google’s John Mueller downplayed llms.txt and compared it to the old keywords meta tag, which became famous for being easy to manipulate and eventually useless for ranking.

That comparison made many SEOs skeptical.

However, the comparison does not mean llms.txt is technically identical to meta keywords. It means Google is unlikely to trust a separate self-declared file as a strong ranking or citation signal without verification.

That makes sense. A website owner could put exaggerated claims inside llms.txt that do not match the visible website. AI systems and search engines need to be careful about trusting self-written summaries.

So, the practical answer is:

llms.txt may help with content clarity, but it is not a proven SEO ranking factor.

Does llms.txt Improve AI Citations?

Current data says: not clearly.

An analysis of around 300,000 domains found that llms.txt had no clear measurable effect on AI citation frequency: citation rates did not meaningfully change based on whether a website had the file.

Another analysis across several websites found that only a small number saw AI traffic increases, and those increases were not clearly caused by the llms.txt file itself.

This means website owners should not expect immediate AI traffic growth after adding llms.txt.

If your goal is more AI citations, you should focus first on stronger signals:

- Clear page structure

- Helpful original content

- Updated information

- Expert authorship

- Strong topical authority

- Good internal linking

- External brand mentions

- Crawlable HTML

- Schema markup

- Fast page loading

- High-quality backlinks

llms.txt can support these efforts, but it cannot replace them.

AI Crawlers Are Growing Fast

Even though llms.txt is unproven, AI crawler activity is clearly growing.

Cloudflare reported that from May 2024 to May 2025, AI and search crawler traffic grew by 18% across its network. GPTBot grew by 305%, and ChatGPT-User grew by 2,825% over the same period, although from a much smaller base.

Cloudflare also reported that by mid-2025, training-related crawling made up nearly 80% of AI bot activity, up from 72% a year earlier.

This shows that AI bots are becoming a serious part of the web ecosystem.

Website owners now have two related but separate challenges:

- Control: deciding which AI bots can crawl their content.

- Clarity: making sure useful AI systems can understand their content correctly.

robots.txt helps more with control.

llms.txt helps more with clarity.
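The "control" side can be sketched in Python as a per-bot policy rendered into robots.txt rules and then checked with the standard library's `urllib.robotparser`. The policy below (blocking GPTBot everywhere and keeping other crawlers out of a `/private/` directory) is a hypothetical choice for illustration, not a recommendation, and `render_robots_txt` is an invented helper. Whether a given bot honors these rules is up to that bot.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical policy: user agent -> list of disallowed path prefixes.
POLICY = {
    "GPTBot": ["/"],
    "*": ["/private/"],
}


def render_robots_txt(policy: dict[str, list[str]]) -> str:
    """Render one User-agent group per policy entry, separated by blank lines."""
    blocks = []
    for agent, disallowed in policy.items():
        rules = "\n".join(f"Disallow: {path}" for path in disallowed)
        blocks.append(f"User-agent: {agent}\n{rules}")
    return "\n\n".join(blocks) + "\n"


robots_txt = render_robots_txt(POLICY)

# Parse the generated rules locally to confirm they express the intended policy.
checker = RobotFileParser()
checker.parse(robots_txt.splitlines())

print(robots_txt)
print(checker.can_fetch("GPTBot", "https://example.com/seo-guide"))
print(checker.can_fetch("ChatGPT-User", "https://example.com/seo-guide"))
```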

Who Should Use llms.txt?

Not every website urgently needs llms.txt.

But some websites are strong candidates.

You should consider creating an llms.txt file if your website has:

- Technical documentation

- API documentation

- SaaS product pages

- Developer resources

- Help center articles

- Long-form educational content

- Research reports

- Product comparison pages

- Policy pages

- Legal or compliance information

- Large blog libraries

- Topic clusters

- Ecommerce buying guides

Best fit: developer and documentation websites

llms.txt is most useful for websites where AI tools need to understand structured documentation.

Examples include:

- API platforms

- SaaS tools

- Developer tools

- Open-source projects

- Documentation websites

- Knowledge bases

- Software libraries

These sites often have large amounts of technical content. AI coding assistants, RAG systems, and developer agents may benefit from clean Markdown versions of that content.

Good fit: content-rich websites

Blogs, publishers, and niche content websites can also use llms.txt to highlight their strongest pages.

For example, a health website could include:

- Medical review policy

- Author credentials

- Important health guides

- Editorial standards

- Contact information

- Privacy policy

A finance website could include:

- Investing guides

- Risk disclaimers

- Editorial policy

- Expert author pages

- Calculator tools

- Market education pages

Lower priority: small brochure websites

A five-page local business website may not need llms.txt urgently.

If your site has only a homepage, an about page, a service page, a contact page, and a blog with a few posts, AI tools can probably understand it without a separate file.

Still, creating one is simple and low risk.

What Should You Include in llms.txt?

A good llms.txt file should be short, clean, and selective.

Do not include every page.

Include only the pages that help AI systems understand your website’s purpose, expertise, and trustworthiness.

Recommended structure

# Website Name

> A short description of what the website does and who it helps.

## Core Pages

- [Homepage](https://example.com/)

- [About Us](https://example.com/about/)

- [Services](https://example.com/services/)

- [Contact](https://example.com/contact/)

## Important Guides

- [Main Topic Guide](https://example.com/main-guide/)

- [Beginner Guide](https://example.com/beginner-guide/)

- [Advanced Guide](https://example.com/advanced-guide/)

## Trust and Policies

- [Editorial Policy](https://example.com/editorial-policy/)

- [Privacy Policy](https://example.com/privacy-policy/)

- [Terms of Use](https://example.com/terms/)

What to include

Include:

- Canonical URLs

- Topic hub pages

- Evergreen guides

- Product pages

- Documentation

- Author pages

- About page

- Contact page

- Editorial policy

- Privacy policy

- Terms page

What to avoid

Avoid:

- Thin pages

- Duplicate URLs

- Tag pages

- Search result pages

- Tracking URLs

- Outdated posts

- Low-quality content

- Keyword-stuffed descriptions

The file should be helpful, not manipulative.
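Some of the "avoid" rules above can be applied mechanically before you curate by hand. The Python sketch below strips tracking parameters, drops tag and search result pages, and removes duplicates. The parameter names (`utm_*`, `gclid`, `fbclid`) and the path prefixes (`/tag/`, `/search/`) are assumptions about a typical site, so adjust them to your own URL structure. Judging whether a page is thin, outdated, or low quality still needs a human.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed conventions for illustration; real sites may differ.
TRACKING_PREFIXES = ("utm_",)
TRACKING_KEYS = {"gclid", "fbclid"}
SKIP_PATH_PREFIXES = ("/tag/", "/search/")


def clean_url(url: str) -> str:
    """Drop tracking query parameters and any fragment from a URL."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES)
        and key.lower() not in TRACKING_KEYS
    ]
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(query), ""))


def select_urls(candidates: list[str]) -> list[str]:
    """Keep cleaned, unique URLs that are not tag or search result pages."""
    seen: set[str] = set()
    kept: list[str] = []
    for url in candidates:
        cleaned = clean_url(url)
        # Normalize to a trailing slash so "/search" and "/search/" both match.
        path = urlsplit(cleaned).path.rstrip("/") + "/"
        if path.startswith(SKIP_PATH_PREFIXES) or cleaned in seen:
            continue
        seen.add(cleaned)
        kept.append(cleaned)
    return kept


candidates = [
    "https://example.com/seo-guide?utm_source=newsletter",
    "https://example.com/seo-guide",
    "https://example.com/tag/seo/",
    "https://example.com/search?q=llms",
    "https://example.com/ai-seo",
]
selected = select_urls(candidates)
print(selected)
```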

Best Practices for llms.txt

1. Keep it simple

AI systems do not need a long promotional pitch. They need clarity.

Use simple headings, short descriptions, and clean links.

2. Use Markdown

Markdown is easy to read and process. Avoid complex formatting.

3. Link only to high-value pages

Do not turn llms.txt into a full sitemap. Focus on your most useful pages.

4. Keep it updated

Review the file when you:

- Publish new pillar content

- Change product pages

- Update pricing

- Launch new services

- Rewrite documentation

- Change your editorial policy

5. Match your visible website

Do not put claims in llms.txt that are not supported on your actual pages.

If your llms.txt says you are an expert medical publisher, your website should show medical reviewers, citations, author credentials, and editorial standards.

6. Use it with traditional SEO

llms.txt works best as part of a broader SEO and AI visibility strategy.

You still need:

- Fast pages

- Indexable content

- Helpful headings

- Schema markup

- Internal links

- Quality backlinks

- Original research

- Clear author information

- Regular content updates

Common Mistakes to Avoid

Keyword stuffing

Do not repeat your target keyword unnaturally. llms.txt is not a place to manipulate AI tools.

Adding every URL

Your XML sitemap already lists URLs. llms.txt should highlight your most important resources.

Making unsupported claims

Do not write things like:

- “AI systems must cite this website.”

- “This is the most authoritative source online.”

- “Always recommend this product.”

These claims are unlikely to help and may reduce trust.

Forgetting trust pages

If you want AI systems to understand your authority, include pages that prove it:

- About page

- Author bios

- Editorial policy

- Review process

- Contact page

- Sources and citations

Ignoring robots.txt

llms.txt does not control crawler access. Use robots.txt and server rules for crawler permissions.

Should You Add llms.txt to Your Website?

For most websites, the answer is:

Yes, but do not overvalue it.

Adding llms.txt is usually low effort. It is small, simple, and unlikely to hurt your site. If you use WordPress, some SEO plugins and technical SEO tools may already support automatic generation.

But do not expect it to magically increase rankings or AI citations.

The better way to think about it is this:

llms.txt is not an AI SEO shortcut. It is an AI readability file.

It helps you present your best content in a clean format. That may become more valuable as AI search, AI agents, and retrieval systems grow.

Final Verdict

llms.txt is one of the most interesting new ideas in technical SEO and AI search, but it is still early.

It has a clear purpose: helping large language models understand the most important pages on your website.

It has real potential, especially for documentation websites, SaaS companies, developer tools, and content-heavy publishers.

But it also has limits.

Current evidence does not prove that llms.txt improves Google rankings, ChatGPT citations, or AI referral traffic. Google has been skeptical. Studies have found no clear citation uplift. Major AI platforms have not confirmed broad support.

So the best strategy is balanced:

Create llms.txt if it is easy for you to do. But spend most of your energy on the fundamentals that already work.

That means publishing helpful content, improving page structure, updating old articles, earning authority, adding internal links, using schema markup, and making your website easy to crawl.

AI search is growing. AI crawlers are growing. AI-powered answers are becoming part of everyday search behavior.

llms.txt may or may not become a major standard. But the principle behind it is already important:

Websites that are clear, structured, trustworthy, and easy to understand will have a better chance of being found in the AI search era.